There’s no doubt that artificial intelligence, and generative AI in particular, could radically reshape the education landscape. Yet even as many schools integrate the technology into administrative and teaching workflows, teach it to students and encourage users to explore its possibilities, others aim to block it entirely.

“There is a huge disparity between people engaging with it and people hoping it goes away,” Bryan Krause, CDW’s senior national school safety strategist, said at CoSN 2025. He compared AI to a bike, which can’t function without someone to steer and pedal it.

Ken Shelton, the event’s opening keynote speaker, said that he tries to dig deeper when he hears about schools shutting AI out entirely. “Does that mean you blocked spellcheck and your email spam filters?” he asked, urging listeners to understand how these systems work.

Still, he acknowledged that while people in K–12 schools can use AI as a powerful learning tool, it’s vital to use the technology with an appropriate understanding of its potential pitfalls and biases.

Unraveling AI’s Possibilities and Potential Biases

One of the most common concerns from schools and parents about the use of AI in the classroom is the opportunity for students to cheat, Shelton said. “I don’t call it cheating. Instead, I say ‘to be detrimental to or compromise their learning.’ What is happening to lead a student to compromise their learning?”

He said that pedagogical approaches and assignments need to evolve to more appropriately prepare students for the world they’re part of: “It’s easy to gravitate toward things that make things easier.”

However, he stressed that anyone using AI, whether a child or an adult, needs to understand how it works, including its inherent biases.

While bias exists in all generative AI models, it’s easiest to identify in image generators, Shelton said. Even the “safest” AI models will show their biases in 15 seconds, he argued; all he needs to do is ask one to generate an image of a teenager wearing an ankle monitor. “Take a guess at what the images were. The images were individuals that look just like me,” he said.

“Generative AI works based on patterns to make predictions,” he explained. “The more blanks we leave in, the more bias will fill those blanks.” K–12 leaders and educators need to understand how these systems work so they can get to a place where they’re using AI to adapt learning experiences to students’ lived realities, strengths and needs.

Seeing Both Sides in an AI Ethical Dilemma

AI can also create ethical dilemmas, through both the outcomes of its implementation and its very nature, presenters said on Wednesday in a session titled “Ethical Dilemmas in AI.”

“Dilemmas are not easy. There are usually two pulls and no right or wrong answers, and typically, the stakes are pretty high,” said Jessica Fields, a 21st century learning coach at Grandview Heights Schools in Ohio.

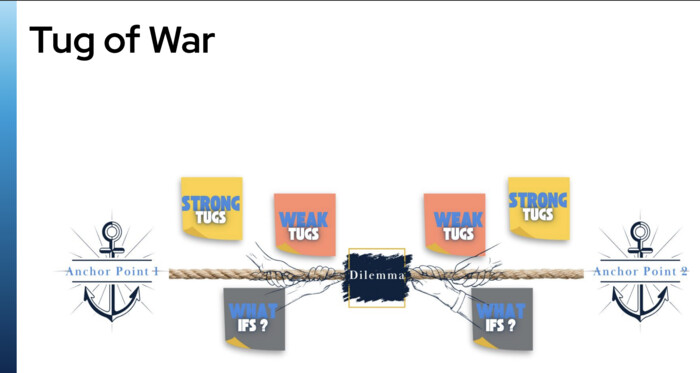

Fields shared a tug-of-war method from Project Zero’s “Thinking Routines Toolbox” to use when making decisions involving a dilemma, adding that “it’s important to have tugs on both sides.” While this session focused on AI dilemmas, the method applies to any dilemma school leaders are facing.

A diagram presented in the CoSN 2025 session “Ethical Dilemmas in AI” illustrates the tug-of-war thinking routine.

Fields also noted that decision-makers should consider all perspectives if they choose to implement this process. Stakeholders at all levels should be represented or considered.

Presenters — who also included Delaware City (Ohio) School District’s CTO Jennifer Fry and Director of Secondary Education Aaron Cook, and Grandview Heights Schools’ CTO Christopher Deis — then had CoSN 2025 attendees talk through various hypothetical dilemmas concerning AI and ethics. For example:

- A school integrates AI into its workflows and finds that it has made some staff positions redundant. However, these staff members have strong relationships with the students and positively impact the school’s culture. Should the school fire the employees?

- Face recognition technology detects students’ and staff members’ moods and body language in the school building, helping to de-escalate conflicts and avoid violent incidents. However, the monitoring is done without explicit consent. Is it ethical for the school to use this system without consent, given its safety benefits?

- A teacher becomes dependent on AI for lesson planning, lecturing and giving feedback to students. This leads to an increase in student performance but a decrease in the teacher’s engagement with students. Does this compromise the quality of the students’ education? Is it ethical for the teacher to use AI in this way?

Participants discussed financial angles, cybersecurity concerns and the general importance of maintaining a human element in the scenarios. Conferencegoers advocated on each side of the various dilemmas.

“Your goal is to see both sides so that you have the best information,” Fields said.
