High-Performance Computing Powers University Research

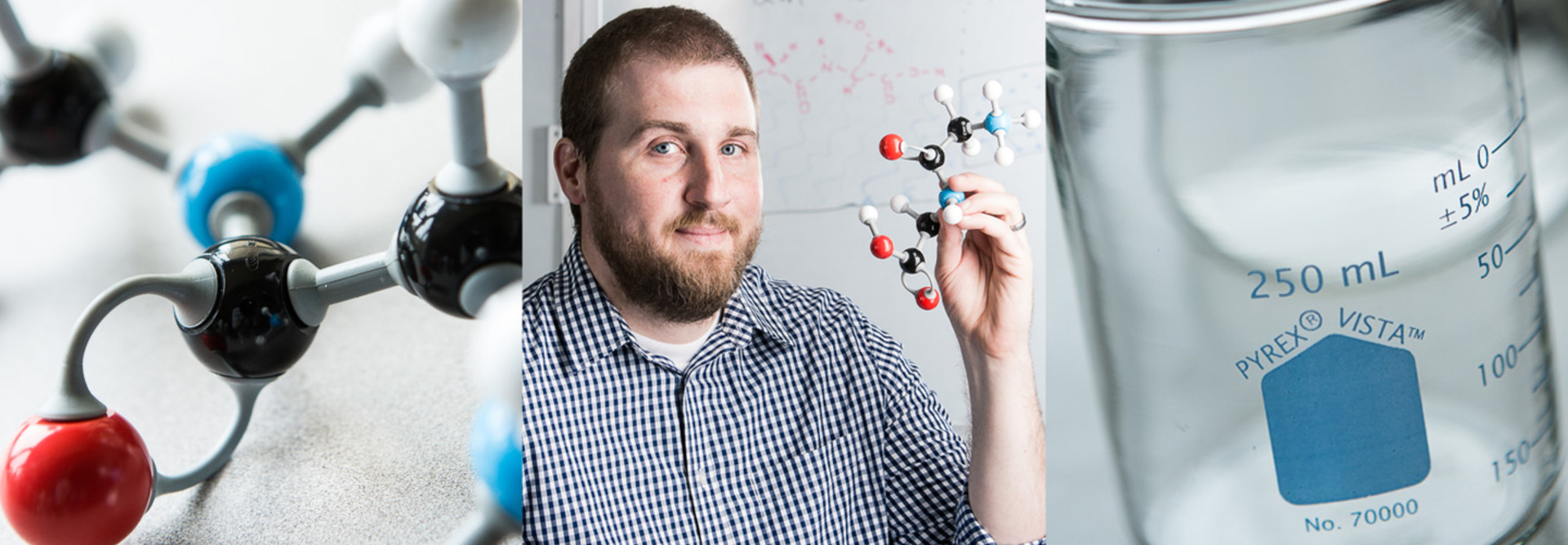

The University of St. Thomas in St. Paul, Minn., doesn’t yet have a campuswide high-performance computing (HPC) center, so Joshua Layfield, an assistant professor of chemistry, built one for himself.

Layfield’s research into the interface between molecules in different states, like much of leading-edge science today, requires massive computational capabilities. Since March, a Hewlett-Packard ProLiant Gen9 server cluster, with its 384 processors, has provided the number-crunching power that Layfield and his students need.

“We have a computer lab where we can run calculations for classroom activities and some upper-level labs, but I couldn’t get a whole lot done on the research side of things without the HPC system,” says Layfield, who is starting his second year at the private university, whose total enrollment is about 10,200 students.

The research happening there is not only valuable for the knowledge it produces, but also crucial to teaching students how the field works, Layfield says.

“Research is the major way we help our students understand chemistry, including the computation and data analysis we do with the HPC cluster,” he says.

As he did with other researchers who set up HPC outposts at the university, Enterprise Architect and IT Operations Manager Eric Tornoe consulted with Layfield about connectivity, power and cooling issues for his cluster. Tornoe looks forward to a future when researchers won’t have to put together and manage their own HPC systems at the university.

“We’re in the early planning stages of creating a high-performance computing center that will be available universitywide,” he says. “The model we’re looking to create allows researchers to focus on their area of research rather than on their computing needs, where we’re better suited to provide the expertise.”

Photo: Chris Bohnhoff

Essential Tools

High-performance computing has become essential to tackling the burgeoning mounds of research data in a growing array of fields, says Steve Conway, research vice president for HPC at IDC.

“High-performance computing is not just for physical sciences — all of science is getting more complicated,” says Conway. “With the rise of areas like genomics, for example, biology is becoming a largely digital science. HPC also increasingly backs the social sciences and humanities, in fields like archeology and linguistics.”

According to Conway, spending for HPC in higher education is rising steadily, and even small schools have labs with HPC clusters. Many large universities are building HPC centers to serve the needs of faculty across all disciplines. Researchers also contract time at regional and national supercomputing centers.

“HPC is not just a bigger data center,” he says. “A conventional data center is transactional and runs many thousands of small jobs a day. HPC is designed to take on huge calculations that can take many hours, days or even months. Besides enormous processing power, there has to be a very low failure rate, given the amount of time and work that’s at stake.”

Keeping PACE at Georgia Tech

The Georgia Institute of Technology, which serves 21,500 students in Atlanta, created the Partnership for an Advanced Computing Environment (PACE) center in 2009 to offer high-performance computing as a central resource for its researchers, says Neil Bright, the chief HPC architect.

“Researchers at Georgia Tech had been doing HPC for a long time,” Bright says, “but before 2009, everybody was on their own. People were renovating closets to provide power and cooling and then popping their clusters in.”

The PACE center has grown rapidly, both in processing power and adoption by the Georgia Tech research community, Bright says, and now contains more than 1,400 servers and 35,000 processors. PACE users include the “usual suspects” from disciplines such as biology, physics, chemistry and biomechanics, but Bright sees increased adoption from researchers in the social sciences, such as psychology.

“Data analytics is emerging as a fourth tenet of science, along with theory, experiment, and modeling and simulation. HPC is critical where researchers gather a huge number of data points and then look for patterns,” he says. “Without it, modern science can be very time consuming and expensive, and some of it is almost impossible.”

An HPC data center presents most of the same issues that arise in conventional consolidated data centers, such as power and cooling, but on a larger scale, Bright says. PACE is a “very heterogeneous” environment because equipment is added as researchers get funding for their projects.

The PACE research computing team is largely focused on ensuring the stability of the HPC platform as well as anticipating and balancing the processing needs of users. PACE has had an impact on research funding at Georgia Tech.

“In our experience, our faculty can put together more competitive grant proposals because they have access to a professionally managed HPC facility,” Bright says. “We’re there to work with faculty and help them improve the pace of their research by making the most efficient use of resources.”

Sharing Access

30x: The factor by which the human brain is faster than the IBM Sequoia supercomputer

SOURCE: IEEE Spectrum, “Estimate: Human Brain 30 Times Faster than Best Supercomputers,” August 2015

When a state supercomputing center closed in 2003, North Carolina State University (NCSU) recognized the need to provide centralized HPC for researchers across the university, says Eric Sills, the executive director of shared services at NCSU, which serves almost 34,000 students.

“Some researchers go their own way with dedicated HPC clusters, but we wanted to change our model to make the resources widely available,” he says.

While the exact configuration of the data center shifts as projects are added or older servers are removed, it has grown to a cluster of about 1,000 server nodes representing about 10,000 processors. The center standardized on IBM/Lenovo Flex System blade servers of various generations, but all use Intel Xeon processors. Besides power and cooling issues, minimizing network latency is one of Sills’ main concerns; connections in the HPC data center are either 10 Gigabit Ethernet or InfiniBand to streamline data flow.

About 100 researchers use the center in any given month. Shared Services uses LSF Fairshare scheduling software to allot HPC time, with consideration given to grant funding and impact on individual research projects.

Sills says many campuses are likely to adopt a centralized HPC resources model because it maximizes the use of computing cycles and makes processing power widely available. “We’re starting to see grants with specific security requirements that would be quite onerous for individual researchers, but we can meet them pretty easily,” he says. “We’re trying to fill the gap between the individual researcher and a national supercomputing center, and that’s where most HPC needs fall.”
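For readers curious how fair-share scheduling allots time on a shared cluster, the general idea can be sketched in a few lines of Python. This is a simplified illustration, not the formula LSF uses and not NCSU's configuration: the `Group` class, the `shares` and `recent_usage` fields, the group names and all of the numbers are hypothetical. The sketch assumes each research group holds a weight and that its priority falls as its recent usage grows.

```python
from dataclasses import dataclass


@dataclass
class Group:
    name: str
    shares: int          # hypothetical weight assigned to the group
    recent_usage: float  # hypothetical CPU-hours consumed recently


def fairshare_priority(group: Group) -> float:
    """More shares raise priority; heavier recent usage lowers it."""
    return group.shares / (1.0 + group.recent_usage)


def dispatch_order(groups: list[Group]) -> list[Group]:
    """Return groups sorted so the highest-priority group runs first."""
    return sorted(groups, key=fairshare_priority, reverse=True)


# Illustrative data only; these are not figures from any real cluster.
groups = [
    Group("chemistry", shares=10, recent_usage=400.0),
    Group("genomics", shares=10, recent_usage=50.0),
    Group("linguistics", shares=5, recent_usage=0.0),
]

for g in dispatch_order(groups):
    print(g.name, round(fairshare_priority(g), 3))
```

In this toy setup, a group that has not run anything recently moves ahead of heavier users even though it holds fewer shares, which is the behavior that lets occasional users get cycles on a busy shared system.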